Biometric systems are becoming the basis for a wide range of identification and profiling solutions in the fields of security and defense, and they are being promoted by governments and businesses around the world.

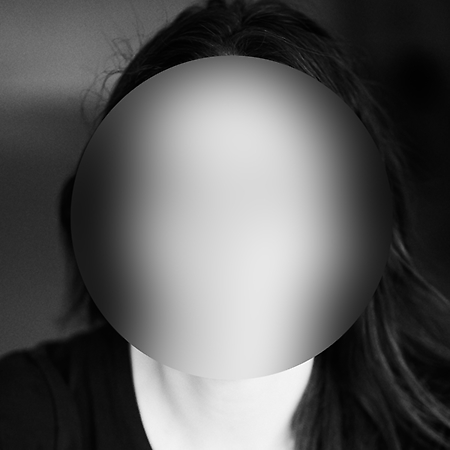

AI companies claim that their technologies can analyze the physical characteristics of a person's face and thereby predict subtle patterns of "suspect" personality types. Inspired by recent psychometric research papers that claimed to use AI to detect a person's criminal potential based solely on a photo of their face, and taking the world of firearms as a starting point, we present a "physiognomic machine": a computer vision and pattern recognition system that detects an individual's ability to handle firearms and predicts their potential dangerousness from a biometric analysis of their face.

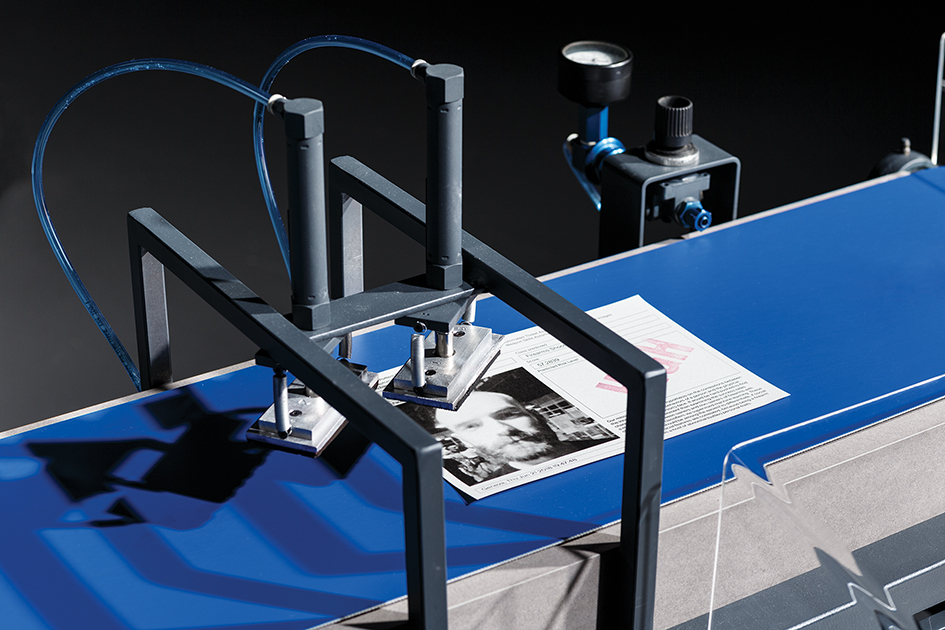

The device consists of a camera-weapon that captures faces, an artificial intelligence machine, and a mechanical system that sorts the profiled persons into two categories: those who present a high risk of being a threat and those who present a lower risk.

Between fiction and reality, this installation exposes the biases and politics behind problematic AI facial profiling systems used to classify humans, systems that reinstate old forms of social and racial discrimination and thereby legitimize power relations through technology.

Through an experience inspired by the protocols of security infrastructures, we take the individual as the starting point for a critical reflection on algorithmic bias: a narrative that addresses the trust placed in intelligent artifacts and the legitimization of empowering them to make decisions.